Escape 'Pilot Purgatory': 5 Radical Shifts in AI Governance

If you've ever watched an exciting AI pilot—one that promised to revolutionize a workflow—grind to a halt before reaching production, you've witnessed "Pilot Purgatory."

It's a common innovation dead end where promising technology is shelved because the associated risks feel unmanageable to compliance and security teams.

This isn't a failure of technology. It's a failure of governance. The operating models we use to manage risk were built for a deterministic world of predictable software. Generative and Agentic AI operate on entirely different principles, rendering our old playbooks obsolete.

To break free, we need a new way of thinking. The Enterprise Anchors™ framework offers a path forward by reimagining governance not as a pre-deployment gate, but as a dynamic, runtime system.

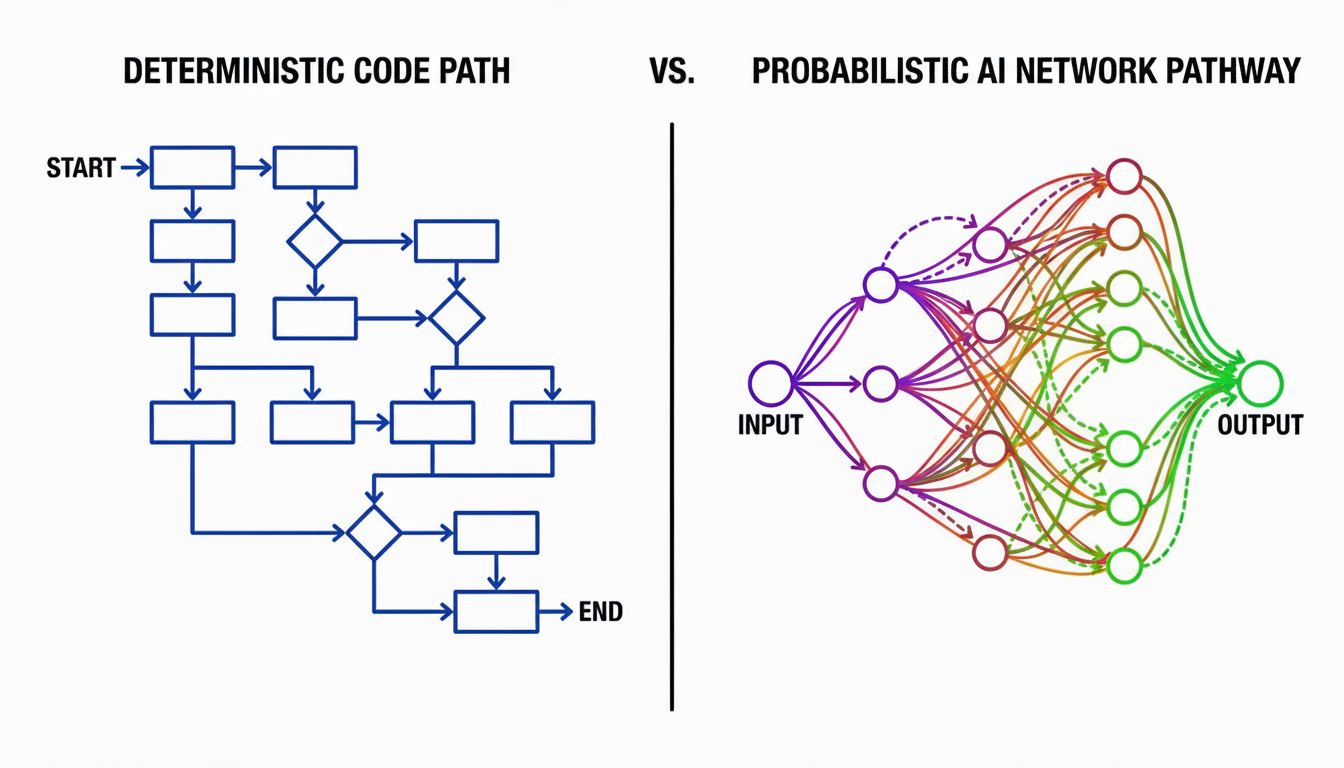

1. Your Old IT Playbook Is Obsolete

The core problem stalling AI adoption is the "Deterministic vs. Probabilistic Rift."

For decades, traditional software governance was built on a simple, predictable contract: f(x)=y. Given the same input, the code would always produce the same output. This allowed risk to be managed almost entirely before deployment.

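Generative AI shatters this contract. It operates on a probabilistic reality: P(y | x, context). For a given input x and context, the model samples an output y from a probability distribution, so the same prompt can produce different responses.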

You cannot test every possible user prompt or data context in advance, so pre-deployment approval on its own is dangerously insufficient.
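A toy Python contrast can make the rift concrete. The refund scenario, the `toy_model` probabilities, and every function name below are invented for illustration. A real LLM samples token by token rather than picking from a few canned replies, but the governance consequence is the same: the deterministic function yields exactly one output, while the sampler yields a spread of outputs for an identical prompt.

```python
import random

def deterministic_discount(price: float) -> float:
    """Traditional software, f(x) = y: the same input always gives the same output."""
    return round(price * 0.9, 2)

def toy_model(prompt: str, context: str) -> dict[str, float]:
    """Hypothetical stand-in for P(y | x, context): a distribution over replies."""
    if "refund" in prompt and context == "premium_customer":
        return {"Approve refund": 0.70, "Escalate to agent": 0.25, "Deny refund": 0.05}
    return {"Escalate to agent": 0.60, "Approve refund": 0.30, "Deny refund": 0.10}

def sample_response(prompt: str, context: str, rng: random.Random) -> str:
    """Draw one reply according to the distribution's weights."""
    dist = toy_model(prompt, context)
    return rng.choices(list(dist), weights=list(dist.values()), k=1)[0]

rng = random.Random(42)  # seeded only so repeated runs of this demo match
print({deterministic_discount(100.0) for _ in range(50)})  # a single value: {90.0}
print({sample_response("I want a refund", "premium_customer", rng) for _ in range(50)})
```

Even this three-reply toy answers the same prompt in different ways; a real model's output space is far too large to enumerate, which is why pre-deployment testing cannot be exhaustive.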

2. Stop 'Trusting' AI. Start Calculating It.

In this new model, we move from subjective trust based on a successful demo to calculated trust based on statistical proof.

This is achieved through the "Autonomy Bundle," a concept that functions like a real-time "credit score" for an AI agent. By applying quality-control principles, specifically Six Sigma, the system continuously calculates the agent's reliability.

- Safety Minima: A binary pass/fail check for non-negotiable breaches (e.g., PII leakage, jailbreaks).

- Operational Sigma: A statistical measure of defects per million opportunities (DPMO).

- Flow & SLOs: Unit economics and latency tracking.

- Security KRIs (Key Risk Indicators): Measuring the threat landscape (e.g., spikes in attacks).

- Governance Completeness: Ensuring the "Black Box Recorder" is online.
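The arithmetic behind Operational Sigma is standard Six Sigma: DPMO = defects × 1,000,000 / (units × opportunities per unit), converted to a sigma level with the inverse normal CDF plus the conventional 1.5-sigma shift. The sketch below pairs that calculation with a hypothetical `AutonomyBundle` record. Its field names, what counts as a "defect" or "opportunity" for an agent, and the rule that every non-sigma signal acts as a hard gate are all assumptions for illustration, not the framework's published definition.

```python
import math
from dataclasses import dataclass
from statistics import NormalDist

def dpmo(defects: int, units: int, opportunities_per_unit: int) -> float:
    """Defects per million opportunities."""
    return defects * 1_000_000 / (units * opportunities_per_unit)

def sigma_level(dpmo_value: float, shift: float = 1.5) -> float:
    """Short-term sigma level using the conventional 1.5-sigma shift."""
    if dpmo_value <= 0:
        return math.inf   # no observed defects: the estimate is unbounded
    if dpmo_value >= 1_000_000:
        return -math.inf  # every opportunity was a defect
    process_yield = 1 - dpmo_value / 1_000_000
    return NormalDist().inv_cdf(process_yield) + shift

@dataclass
class AutonomyBundle:
    """Hypothetical snapshot of the five signals; field names are illustrative."""
    safety_breaches: int         # Safety Minima: any breach is a hard fail
    defects: int                 # Operational Sigma inputs
    units: int
    opportunities_per_unit: int
    p95_latency_ms: float        # Flow & SLOs
    latency_slo_ms: float
    kri_alert: bool              # Security KRIs, e.g. an attack spike
    recorder_online: bool        # Governance Completeness

    def sigma(self) -> float:
        return sigma_level(dpmo(self.defects, self.units, self.opportunities_per_unit))

    def gates_pass(self) -> bool:
        # Binary checks: any single failure blocks autonomy, regardless of sigma.
        return (self.safety_breaches == 0
                and self.p95_latency_ms <= self.latency_slo_ms
                and not self.kri_alert
                and self.recorder_online)

print(round(sigma_level(3.4), 2))  # → 6.0, the textbook "Six Sigma" defect rate
```

For example, 12 defects across 200,000 agent actions with 5 checked opportunities each gives `dpmo(12, 200_000, 5) == 12.0`, roughly a 5.7-sigma process.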

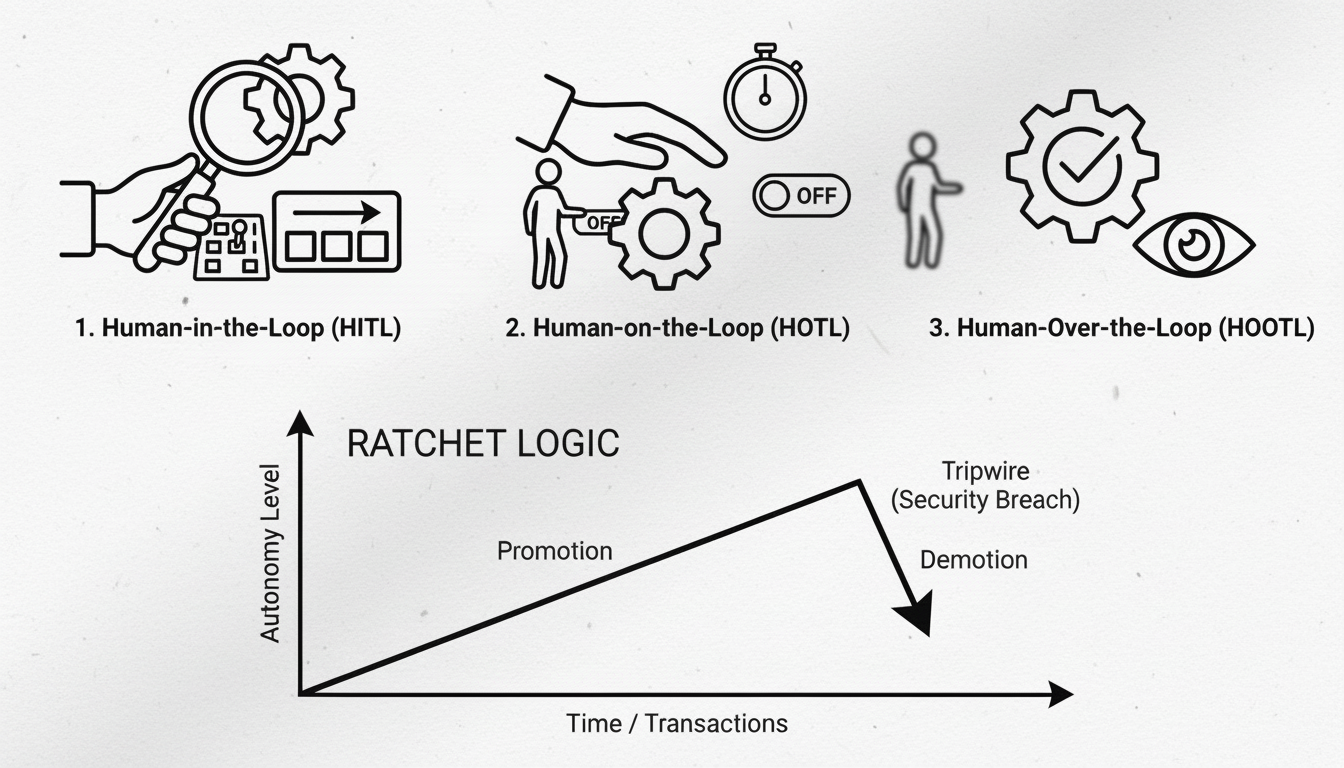

3. Autonomy Isn't an On/Off Switch—It’s a Sliding Scale

Instead of a binary "live" vs. "not live" state, agentic systems can operate on a spectrum of human oversight.

- Human-in-the-Loop (HITL): The AI recommends; the Human approves. (Safe for Day 1).

- Human-on-the-Loop (HOTL): The AI executes; the Human supervises with a "Kill Switch."

- Human-Over-the-Loop (HOOTL): The AI operates autonomously; the Human audits retrospectively.

This system is governed by "Ratchet Logic": autonomy is hard to earn (it requires a high Sigma) but is lost instantly (if a Safety Gate fails).
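Ratchet Logic can be pictured as a small state machine over the three oversight levels. In this minimal sketch, the sigma thresholds and the "three consecutive qualifying review windows" rule are hypothetical values chosen for illustration; only the asymmetry (slow, stepwise promotion versus instant fallback to HITL on a safety failure) comes from the description above.

```python
from enum import IntEnum

class Autonomy(IntEnum):
    HITL = 0   # AI recommends; human approves
    HOTL = 1   # AI executes; human supervises with a kill switch
    HOOTL = 2  # AI operates autonomously; human audits retrospectively

# Hypothetical thresholds: the sigma required to enter each level.
PROMOTION_SIGMA = {Autonomy.HOTL: 4.5, Autonomy.HOOTL: 5.5}
MIN_STREAK = 3  # hypothetical: consecutive qualifying windows needed to promote

class Ratchet:
    def __init__(self) -> None:
        self.level = Autonomy.HITL
        self.streak = 0

    def observe(self, sigma: float, safety_gate_passed: bool) -> Autonomy:
        """Update the autonomy level after one review window."""
        if not safety_gate_passed:
            # Instant loss: any safety failure drops straight back to HITL.
            self.level = Autonomy.HITL
            self.streak = 0
            return self.level
        if self.level == Autonomy.HOOTL:
            return self.level  # already at the top of the scale
        next_level = Autonomy(self.level + 1)
        if sigma >= PROMOTION_SIGMA[next_level]:
            self.streak += 1
            if self.streak >= MIN_STREAK:
                # Slow gain: promote one step only after a sustained streak.
                self.level = next_level
                self.streak = 0
        else:
            self.streak = 0  # a weak window resets progress toward promotion
        return self.level

ratchet = Ratchet()
for _ in range(3):
    ratchet.observe(sigma=4.8, safety_gate_passed=True)
print(ratchet.level.name)  # → HOTL
ratchet.observe(sigma=6.0, safety_gate_passed=False)
print(ratchet.level.name)  # → HITL
```

The sketch only demotes on a safety failure; a fuller design might also step down one level when sigma falls below the current level's threshold.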

4. Your Policy Document Is a 'Dead Artifact'

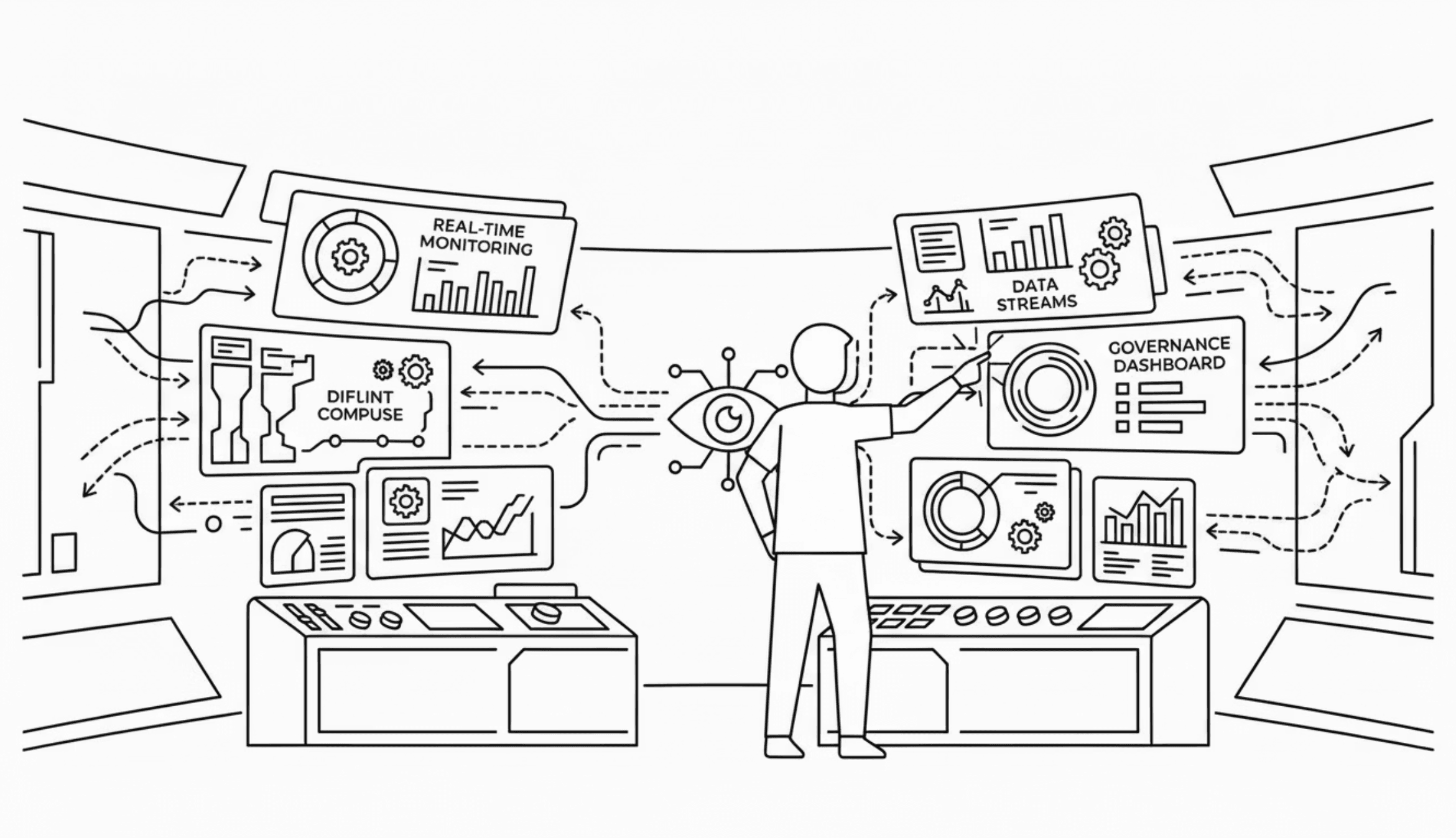

Traditional governance relies on static policy documents, such as PDFs or wikis, that are reviewed annually. They cannot be enforced against an agent that makes decisions in milliseconds.

The solution is "Policy-as-Code."

We don't rely on a fragile system prompt to make the LLM "police itself." Instead, coded policies (for example, Open Policy Agent policies written in Rego) are enforced automatically at "Time-of-Use" by a deterministic architectural component, the Anchor, that sits alongside the AI.
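The key property is that the check runs in ordinary deterministic code between the agent and its tools, not inside the prompt. In an OPA deployment the rule would live in a Rego policy that the enforcement point queries with the proposed call as `input`; to keep this sketch self-contained, it hand-codes an equivalent default-deny rule in plain Python instead of calling OPA. `ToolCall`, the tool names, and the refund limit are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolCall:
    agent_id: str
    tool: str
    amount: float = 0.0
    contains_pii: bool = False

@dataclass(frozen=True)
class Decision:
    allow: bool
    reason: str

# Hypothetical policy data, versioned and reviewed like any other code.
POLICY = {
    "allowed_tools": {"search_kb", "issue_refund"},
    "max_refund": 500.0,
}

def evaluate(call: ToolCall, policy: dict = POLICY) -> Decision:
    """Deterministic check run at time-of-use, outside the LLM."""
    if call.tool not in policy["allowed_tools"]:
        return Decision(False, f"tool '{call.tool}' not allowed")  # default deny
    if call.contains_pii:
        return Decision(False, "PII in outbound payload")
    if call.tool == "issue_refund" and call.amount > policy["max_refund"]:
        return Decision(False, "refund exceeds limit")
    return Decision(True, "ok")

def execute(call: ToolCall) -> str:
    """Enforcement point: the tool runs only if the policy allows it."""
    decision = evaluate(call)
    if not decision.allow:
        return f"BLOCKED: {decision.reason}"
    return f"executed {call.tool}"  # placeholder for the real tool invocation

print(execute(ToolCall("agent-1", "issue_refund", amount=1000.0)))
# → BLOCKED: refund exceeds limit
```

Because the decision never depends on what the model "agrees" to, a prompt-injected agent that requests a forbidden action is stopped the same way every time.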

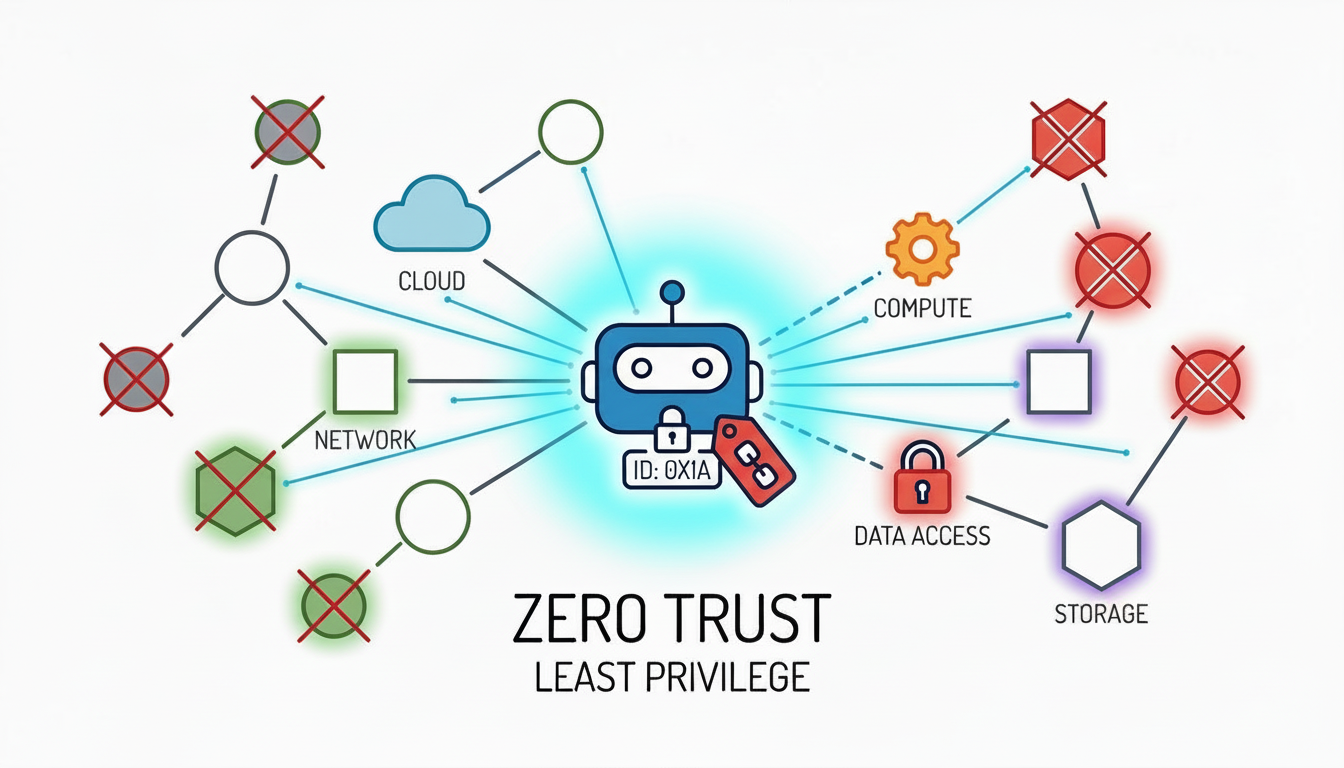

5. Treat Your AI Like an Untrusted User

Finally, we apply "Zero Trust" principles directly to AI agents.

Because an agent can be hijacked via prompt injection, it should never be implicitly trusted, even inside your firewall. Every AI agent is given a unique SPIFFE ID backed by a short-lived cryptographic credential (an SVID), and is granted strict "Least Privilege": only the permissions its task requires.
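The two ideas, short-lived identity and least privilege, reduce to an expiry check plus an explicit allowlist. This is a minimal sketch: `issue_credential`, the `example.org` trust domain, the scope names, and the five-minute lifetime are hypothetical, and it omits signing and verification entirely. In a SPIFFE deployment, SPIRE (the reference implementation) would issue a cryptographically signed SVID rather than a plain Python object.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import FrozenSet, Optional, Set

@dataclass(frozen=True)
class AgentCredential:
    spiffe_id: str             # e.g. "spiffe://example.org/agents/refund-bot"
    scopes: FrozenSet[str]     # explicit allowlist: least privilege
    expires_at: datetime

def issue_credential(agent_name: str, scopes: Set[str], ttl_minutes: int = 5) -> AgentCredential:
    """Hypothetical issuer; a real system would return a signed SVID."""
    return AgentCredential(
        spiffe_id=f"spiffe://example.org/agents/{agent_name}",
        scopes=frozenset(scopes),
        expires_at=datetime.now(timezone.utc) + timedelta(minutes=ttl_minutes),
    )

def authorize(cred: AgentCredential, action: str, now: Optional[datetime] = None) -> bool:
    """Zero-trust check applied to every request, not once per session."""
    now = now or datetime.now(timezone.utc)
    if now >= cred.expires_at:
        return False  # expired identities are never trusted
    return action in cred.scopes  # default deny: anything not granted is refused

cred = issue_credential("refund-bot", {"orders:read"})
print(authorize(cred, "orders:read"))    # → True
print(authorize(cred, "orders:delete"))  # → False
```

Short lifetimes limit how long a stolen or hijacked credential stays useful, and the allowlist bounds what a compromised agent can do in that window.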

Conclusion: From Bottleneck to Enabler

These shifts transform AI governance from a static bottleneck into a dynamic enabler. By moving governance out of paper documents and into the architecture itself, we can build systems that are compliant by design.

Ready to dive deeper? Read the full Technical Standard or download the paper on SSRN.