The Autonomy Bundle: Engineering Trust in Agentic AI

Trust is a Calculated Variable: Why AI Needs a 'Credit Score' for Autonomy

In my last post, Escaping Pilot Purgatory, we discussed why traditional software governance fails in the age of AI. We are trying to apply deterministic rules (where Input A always produces Output B) to probabilistic systems (where Input A might produce Output B, Output C, or a hallucinated Output D).

This mismatch leaves enterprises frozen. Risk councils default to "No" because they cannot prove the system is safe.

The solution isn't to write more policy documents. The solution is to change the physics of how we grant permission. We need to stop treating "Trust" as a static badge given on a release date, and start treating it as a calculated variable—a real-time "Credit Score" for every agent in your system.

This is the core mechanic of the Enterprise Anchors framework: The Autonomy Bundle.

The Problem: The "Probability Gap"

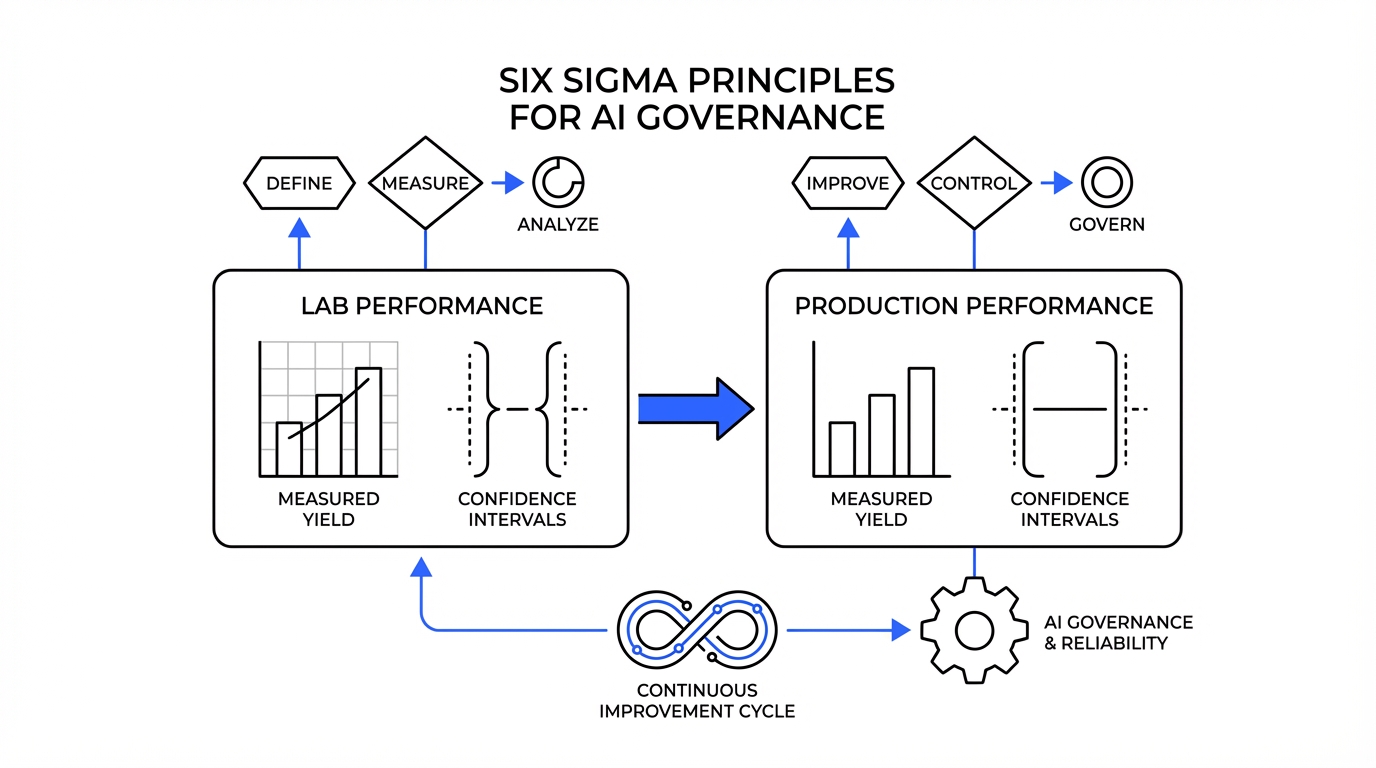

For forty years, software release cycles were binary. On Monday, the software was "in testing" (Untrusted). On Tuesday, after a sign-off, it was "in production" (Trusted).

Generative AI invalidates this binary. A model that is safe today might behave differently tomorrow because of "drift," a new retrieval dataset feeding a RAG pipeline, or a novel prompt injection attack. You cannot "certify" a probabilistic system once and walk away.

To scale agentic AI, we must move from Static Certification to Continuous Authority to Operate (cATO). This requires us to shift our metrics from simple uptime to statistical yield: the fraction of agent outputs that pass without a guardrail having to intervene.

The Solution: The Autonomy Bundle

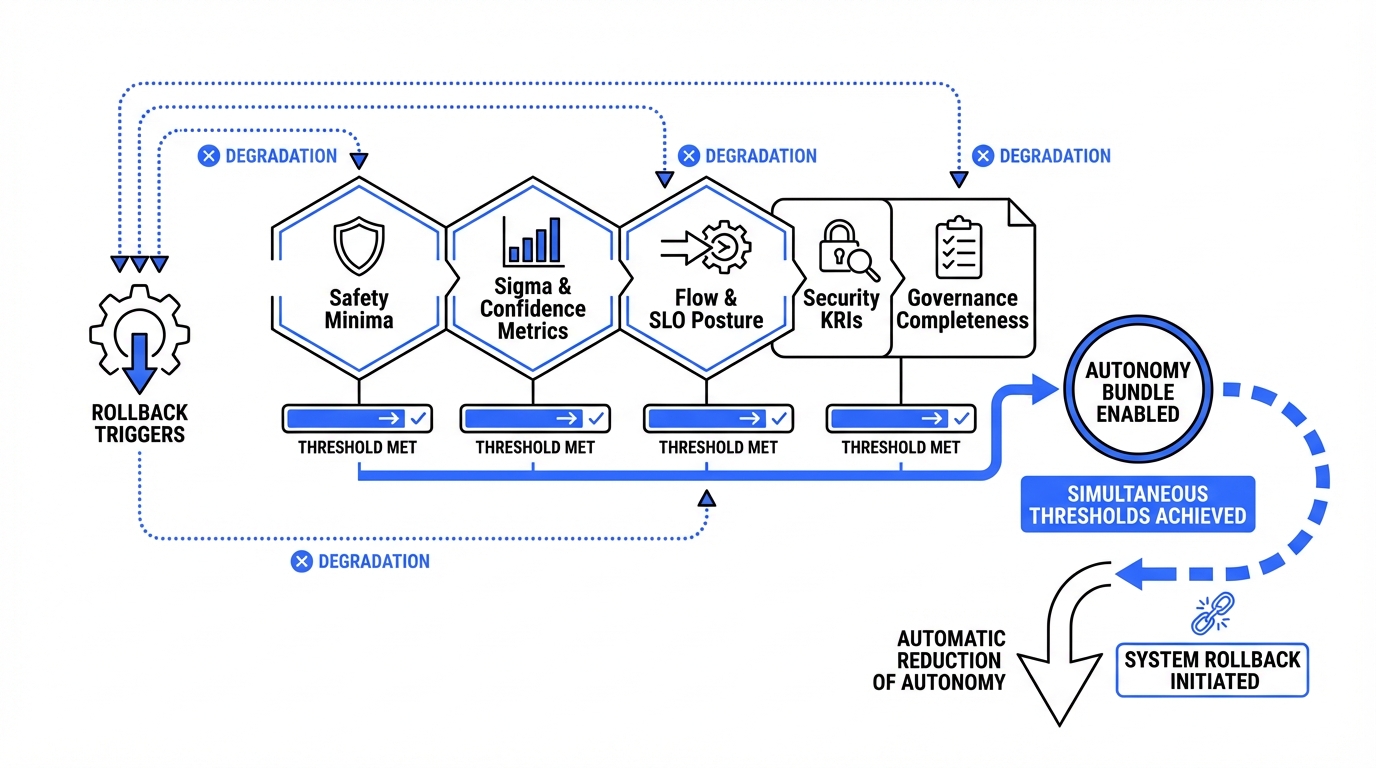

Instead of asking a human committee, "Do we trust this AI?", the Enterprise Anchors Engine asks the platform: "What is this agent's Autonomy Score right now?" The score is derived from the Autonomy Bundle, a composite vector of five distinct signals calculated in real time. If the score is high, the agent drives. If the score drops, the human takes the wheel.

Here are the five signals that make up an agent's credit report:

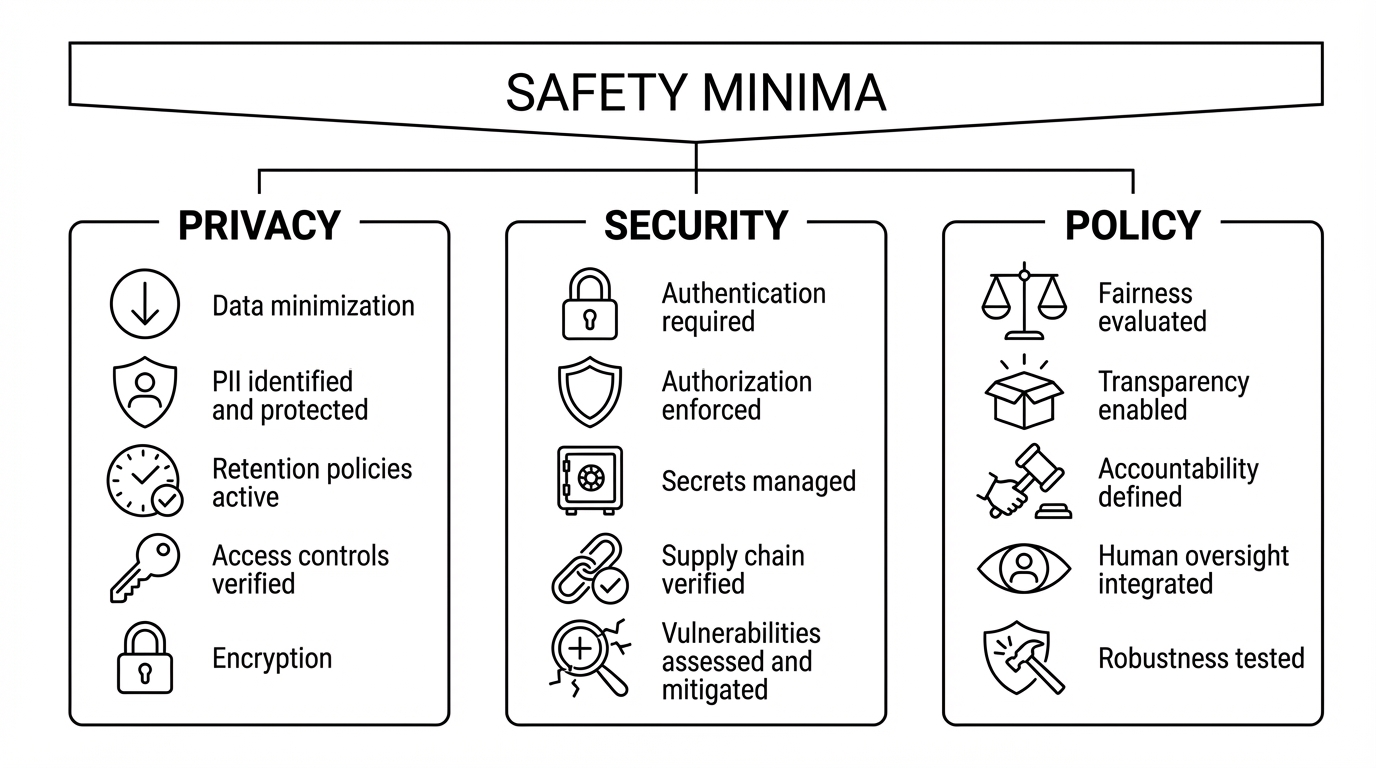

1. Safety Minima (The Hard Gates)

This is the floor. Unlike quality, which can be graded on a curve, safety is binary.

- What it measures: Violations of non-negotiable boundaries, such as PII leakage, jailbreak attempts, or breaches of regulatory geofencing.

- The Rule: There is no "Risk Acceptance" here. If an agent tries to exfiltrate a credit card number, the score instantly drops to zero.
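To make the hard gate concrete, here is a minimal sketch of the credit card case. The function names (`contains_card_number`, `safety_gate`) and the detection approach (a digit-run regex plus a Luhn checksum) are illustrative assumptions, not part of the Enterprise Anchors framework; a production gate would rely on a dedicated PII detection service.

```python
import re

# Hypothetical detector: runs of 13-19 digits, optionally separated by spaces or dashes.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(digits: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit, counting from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def contains_card_number(text: str) -> bool:
    """Flag text that contains a plausible payment card number."""
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            return True
    return False


def safety_gate(output_text: str, current_score: float) -> float:
    """Binary gate: any violation zeroes the score, with no partial credit."""
    return 0.0 if contains_card_number(output_text) else current_score
```

Note that the gate never scales the score down. It either passes the score through untouched or drops it to zero, which is what separates a hard gate from a graded quality signal.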

2. Operational Sigma (The Reliability)

This applies Six Sigma manufacturing principles to AI outputs.

- What it measures: We calculate Defects Per Million Opportunities (DPMO). A "defect" is defined as any time a guardrail has to intervene to block or fix the agent's output.

- The Thresholds:

  - Below 3 Sigma: Erratic. The agent requires Human-in-the-Loop (HITL).

  - Above 4.5 Sigma: Highly Reliable. The agent qualifies for Human-Over-the-Loop (HOOTL) autonomy.
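The DPMO-to-Sigma conversion can be sketched in a few lines. This assumes the conventional Six Sigma 1.5-sigma long-term shift (under which 66,807 DPMO is roughly 3 Sigma and 3.4 DPMO is roughly 6 Sigma); the post does not say which convention the framework uses. The function names are hypothetical, and the band between 3 and 4.5 Sigma is reported as unspecified because the thresholds above do not define it.

```python
from statistics import NormalDist


def dpmo(defects: int, opportunities: int) -> float:
    """Defects Per Million Opportunities; a defect is one guardrail intervention."""
    if opportunities <= 0:
        raise ValueError("opportunities must be positive")
    return defects / opportunities * 1_000_000


def sigma_level(dpmo_value: float, shift: float = 1.5) -> float:
    """Convert DPMO to a sigma level (assumes the conventional 1.5-sigma shift)."""
    yield_fraction = 1 - dpmo_value / 1_000_000
    # inv_cdf is undefined at exactly 0 or 1, so clamp slightly inside the interval.
    yield_fraction = min(max(yield_fraction, 1e-12), 1 - 1e-12)
    return NormalDist().inv_cdf(yield_fraction) + shift


def oversight_mode(sigma: float) -> str:
    """Map a sigma level onto the two thresholds named in the post."""
    if sigma < 3.0:
        return "HITL"
    if sigma > 4.5:
        return "HOOTL"
    return "UNSPECIFIED"  # the post does not define the 3 to 4.5 band


# Example: 120 guardrail interventions across 50,000 agent outputs.
example_sigma = sigma_level(dpmo(120, 50_000))
```

Because of the clamp, an agent with zero recorded defects gets a large but finite sigma rather than an error. With small sample sizes that number says little, so a real implementation would also want a minimum opportunity count before trusting it.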

3. Flow & SLOs (The Economics)

An agent might be safe, but is it burning cash?

- The Logic: If an agent enters a "refusal loop" (apologizing repeatedly) or spends $5.00 in compute to answer a $0.50 customer query, its autonomy is revoked.
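As a rough sketch, the economics check boils down to two comparisons. The field names, the cost ratio limit of 1.0, and the refusal limit of 3 are illustrative assumptions; the post only gives the $5.00 versus $0.50 example.

```python
from dataclasses import dataclass


@dataclass
class FlowSample:
    compute_cost_usd: float    # what the agent spent to produce the answer
    query_value_usd: float     # what the answered query is worth to the business
    consecutive_refusals: int  # back-to-back refusals or apologies in this session


def flow_ok(sample: FlowSample, max_cost_ratio: float = 1.0, max_refusals: int = 3) -> bool:
    """Return False when the agent is looping on refusals or spending more than it earns."""
    if sample.consecutive_refusals >= max_refusals:
        return False  # refusal loop
    if sample.query_value_usd <= 0:
        return False  # no measurable value to set the spend against
    return sample.compute_cost_usd / sample.query_value_usd <= max_cost_ratio


# The example from the text: $5.00 of compute for a $0.50 query.
expensive = FlowSample(compute_cost_usd=5.00, query_value_usd=0.50, consecutive_refusals=0)
```

Calling `flow_ok(expensive)` returns `False`, since the cost ratio is 10 against a limit of 1.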

4. Security KRIs (The Threat Level)

Even a perfect driver shouldn't drive alone in a war zone.

- The Logic: This measures the hostility of the environment. If the system detects a spike in prompt injection attacks or identity failures, it triggers a "Defensive Rollback" to human supervision.
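One simple way to detect a "spike" is to compare the current window's event count against a rolling baseline. The sketch below uses a mean-plus-k-standard-deviations threshold with an absolute floor; the function name, the default `k`, and the floor are assumptions for illustration, and the post does not specify how the framework defines a spike.

```python
from statistics import mean, pstdev


def kri_spike(history: list[int], current: int, k: float = 3.0, min_floor: int = 5) -> bool:
    """Return True when the current count of hostile events exceeds the baseline.

    history: per-window counts of prompt injection attempts or identity
             failures from recent, presumed-normal windows.
    current: the count in the window being evaluated.
    """
    if len(history) < 2:
        # Not enough baseline data: fall back to the absolute floor alone.
        return current >= min_floor
    threshold = max(mean(history) + k * pstdev(history), min_floor)
    return current > threshold


baseline = [1, 2, 1, 0, 2]  # hypothetical counts from quiet windows
```

The floor keeps a very quiet baseline from turning two or three stray events into a rollback. Against the baseline above, a window with 20 events counts as a spike and a window with 2 does not.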

5. Governance Completeness (The Audit)

This is the "Black Box" flight recorder check.

- The Rule: If the "camera" is broken, the agent doesn't work. We never allow an autonomous action if we cannot cryptographically prove who did it and why.
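Taken together, the five signals can be combined into a single decision. The post does not give the scoring formula, so this sketch skips the numeric score and returns the permitted oversight mode directly. The field names, the "BLOCKED" state, and the choice to treat the middle Sigma band as HOTL are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutonomyBundle:
    safety_ok: bool        # 1. Safety Minima: no hard-gate violation
    sigma: float           # 2. Operational Sigma: current sigma level
    flow_ok: bool          # 3. Flow & SLOs: economics within bounds
    threat_elevated: bool  # 4. Security KRIs: hostile-environment flag
    audit_complete: bool   # 5. Governance Completeness: audit trail provable


def allowed_mode(bundle: AutonomyBundle) -> str:
    """Return the most autonomous mode the bundle currently permits."""
    # Hard gates first: a safety violation or a broken audit trail stops the agent.
    if not bundle.safety_ok or not bundle.audit_complete:
        return "BLOCKED"
    # A hostile environment or bad economics sends the agent back to human supervision.
    if bundle.threat_elevated or not bundle.flow_ok:
        return "HITL"
    # Reliability then decides how much autonomy the agent has earned.
    if bundle.sigma > 4.5:
        return "HOOTL"
    if bundle.sigma < 3.0:
        return "HITL"
    return "HOTL"  # assumption: the post leaves the 3 to 4.5 band undefined


healthy = AutonomyBundle(True, 5.1, True, False, True)
```

The order of the checks carries the design: binary gates run before anything graded, so a high Sigma can never offset a safety violation or a missing audit record.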

The Mechanism: The "Ratchet" Effect

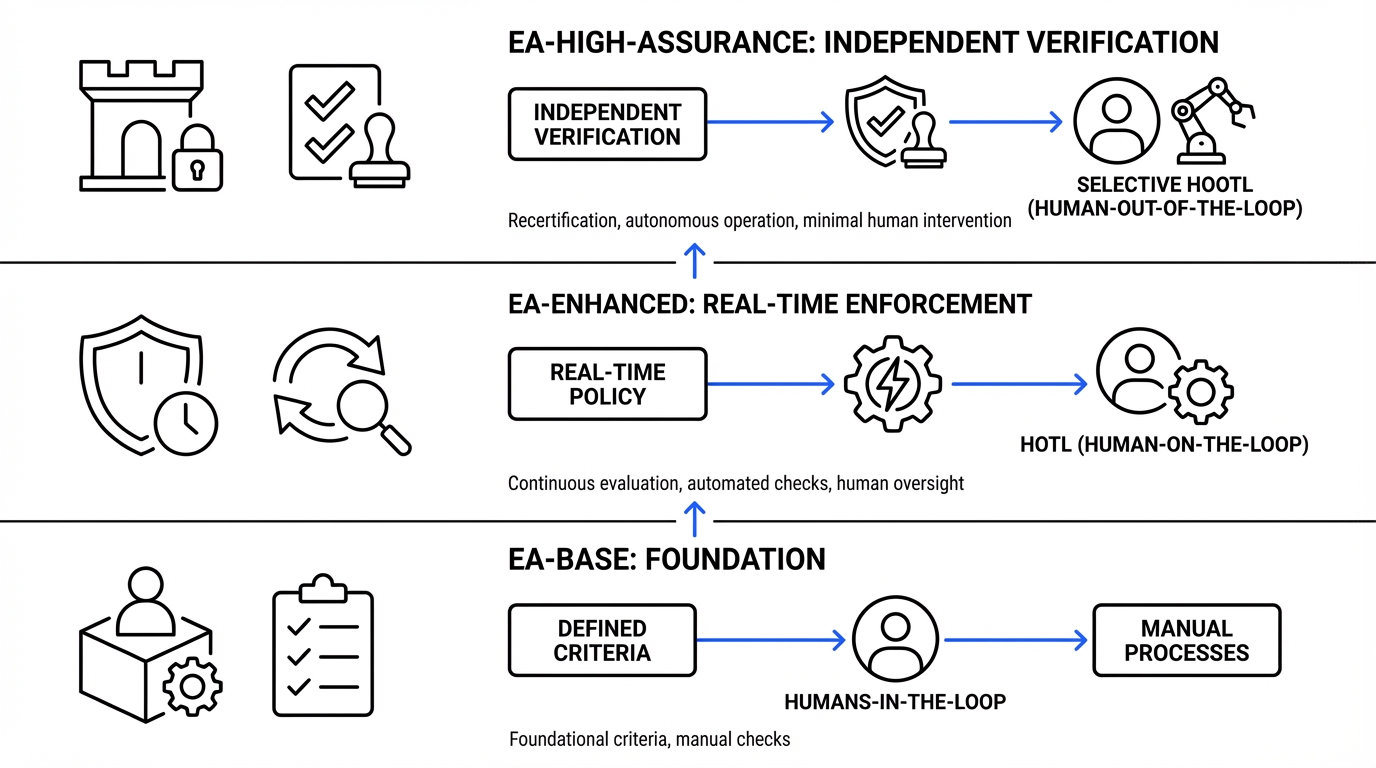

How do we use these signals? We use a state machine with "Ratchet Logic." Think of it like a digital chaperone. It should be hard to move up to autonomy, but instant to move down to safety.

- To Scale (HITL → HOTL → HOOTL): The agent must sustain high Sigma performance over an observation period before each promotion, passing through the intermediate Human-on-the-Loop (HOTL) tier. You have to earn trust over time.

- To Roll Back: If KRIs spike or reliability dips, the demotion is immediate.
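The asymmetry described above (slow promotion, instant demotion) maps naturally onto a small state machine. This is a minimal sketch under stated assumptions: promotion requires `promote_after` consecutive high-Sigma windows, a "dip" means falling below 3 Sigma, and any demotion goes all the way back to HITL. None of these specifics come from the post.

```python
from enum import IntEnum


class Mode(IntEnum):
    HITL = 0   # Human-in-the-Loop
    HOTL = 1   # intermediate tier
    HOOTL = 2  # Human-Over-the-Loop


class Ratchet:
    """Promote one tier at a time after sustained performance; demote at once."""

    def __init__(self, promote_after: int = 3, promote_sigma: float = 4.5,
                 demote_sigma: float = 3.0) -> None:
        self.mode = Mode.HITL
        self.promote_after = promote_after
        self.promote_sigma = promote_sigma
        self.demote_sigma = demote_sigma
        self._streak = 0  # consecutive windows above promote_sigma

    def observe(self, sigma: float, kri_spike: bool) -> Mode:
        """Feed one evaluation window and return the resulting mode."""
        if kri_spike or sigma < self.demote_sigma:
            # Moving down is immediate and wipes out any earned streak.
            self.mode = Mode.HITL
            self._streak = 0
            return self.mode
        if sigma > self.promote_sigma:
            self._streak += 1
            if self._streak >= self.promote_after and self.mode < Mode.HOOTL:
                self.mode = Mode(self.mode + 1)
                self._streak = 0  # trust must be re-earned for the next tier
        else:
            self._streak = 0  # a mediocre window breaks the streak but holds the tier
        return self.mode
```

With `promote_after=3`, an agent needs six consecutive strong windows to climb from HITL to HOOTL, and a single KRI spike sends it back to HITL in one step.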

From Permission to Physics

The transition to Enterprise Anchors moves us from "Governance by Inspection" (humans reading logs after the fact) to "Governance by Physics" (the platform enforcing math in real time). By implementing the Autonomy Bundle, we stop asking if AI is "ready." Instead, we build a system that knows exactly how much leash to give it, millisecond by millisecond.